Authors: D. Rodrigues Da Costa, P. Vasseur, F. Morbidi

Affiliation: MIS laboratory, University of Picardie Jules Verne, Amiens, France

Email (corresponding author): daniel.rodrigues.da.costa[at]u-picardie.fr

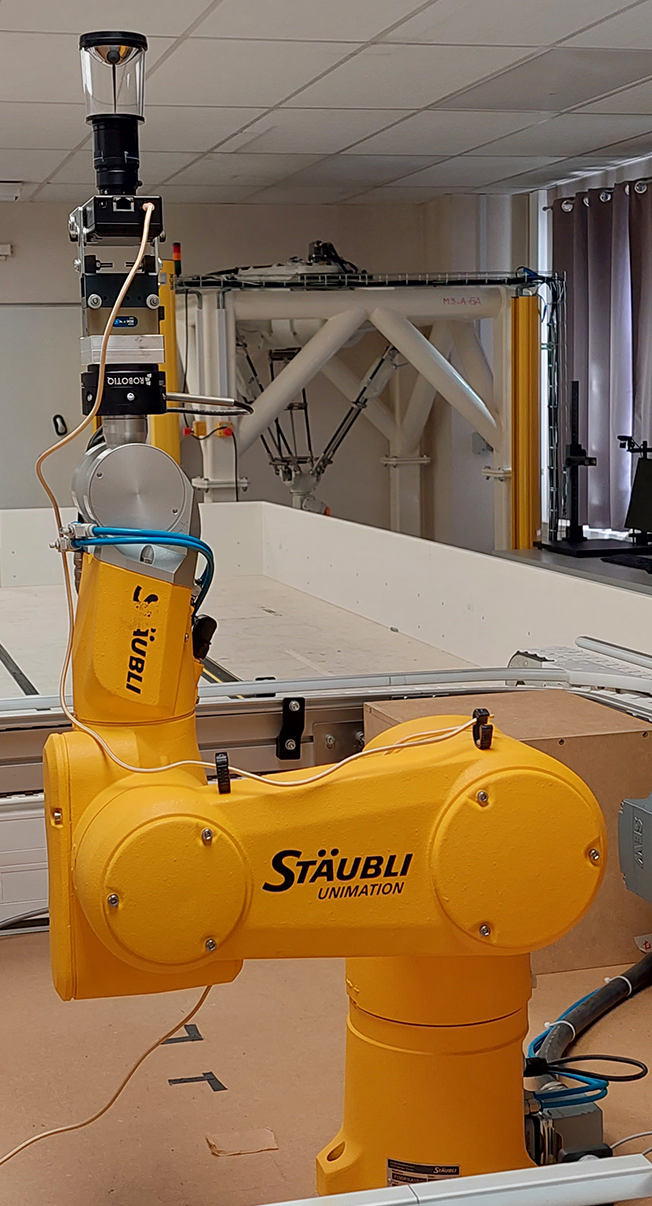

This page provides supplementary material that complements the results reported in [1]: an extended paper with additional CARLA simulations (see Fig. 2), omnidirectional event datasets, Matlab code, camera calibration parameters, and videos. The experiments with the Stäubli TX-60 robot (see Fig. 1) used an omnidirectional event camera consisting of a Prophesee EVK3-HD camera and a VStone VS-C450MRTK catadioptric objective (hyperbolic mirror). In the video comparing the three speeds of the Stäubli TX-60 robot, Sequences 1a, 1b, and 1c are labeled "Sequence 1, Speed 25%, 50%, 75%", and Sequences 2a, 2b, and 2c are labeled "Sequence 2, Speed 50%, 75%, 100%", respectively. The percentages are relative to the maximum achievable speed of the robot. Finally, the experiment with the handheld catadioptric camera (see Fig. 3) used an Xsens MTi IMU.

Extended paper

- Manuscript: pdf

Event datasets

- Synthetic data (CARLA simulator)

  - Urban trajectory [3-DoF]: Data

- Real data (Stäubli TX-60 robot)

Matlab files

- Gyrevento: Code

Calibration of the catadioptric event camera

- Images and parameters: Data

Videos

- Synthetic data (CARLA simulator)

  - Urban trajectory [3-DoF]: Video

- Real data (Stäubli TX-60 robot)

- Real data (Catadioptric event camera and IMU)

  - Sequence 3 [6-DoF]: Video

References

[1] Gyrevento: Event-based Omnidirectional Visual Gyroscope in a Manhattan World, D. Rodrigues da Costa, P. Vasseur, F. Morbidi, IEEE Robotics and Automation Letters, vol. 10, no. 3, pp. 2910-2917, March 2025 (to be presented at the IEEE/RSJ International Conference on Intelligent Robots and Systems, Hangzhou, China, October 19-25, 2025) [pdf]

[2] Vision Événementielle Omnidirectionnelle: Théorie et Applications, D. Rodrigues da Costa,

Acknowledgements

This work was supported by the AID ("Agence de l'Innovation de Défense") through the research project EVENTO, "Omnidirectional Event Cameras for High-Speed Robots" (2021-2024), and by the French and Austrian National Research Agencies through the EVELOC project, "Event-based Visual Localization" (ANR-23-CE33-0011).